10 deepfake detection tools ranked by real-world accuracy

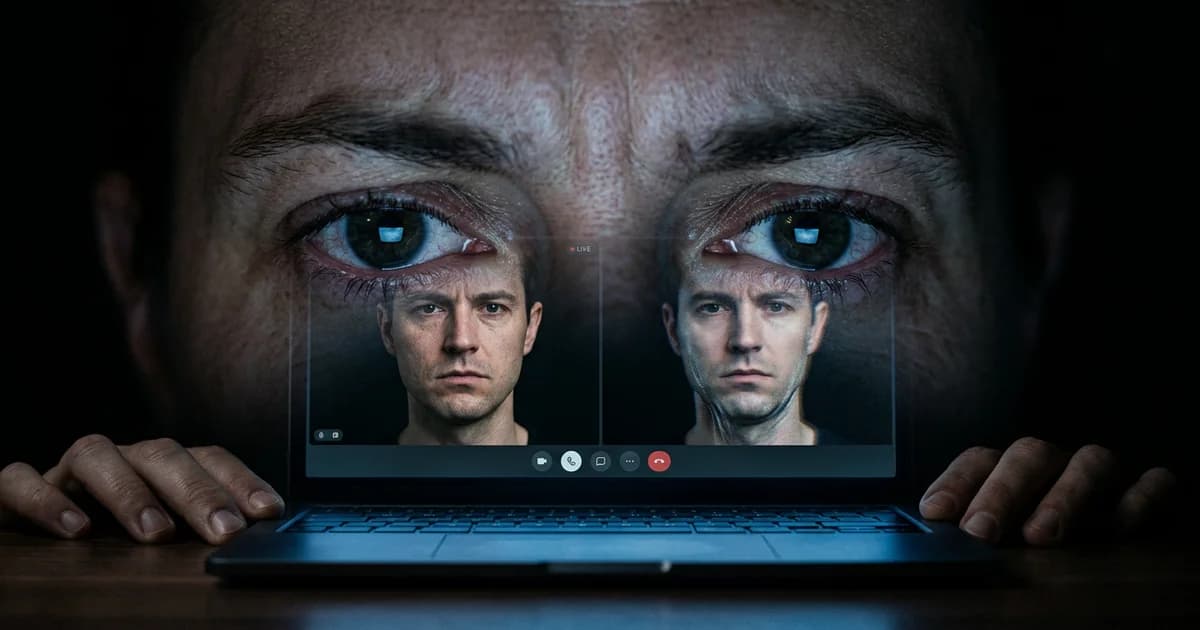

The confidence problem with deepfake detection tools

An iProov study tested thousands of people on their ability to spot deepfakes. Only 0.1% correctly identified every fake and real piece of media shown to them. For high-quality video deepfakes, human detection accuracy drops to 24.5%. Meanwhile, 60% of people believe they could spot a deepfake if they saw one.

That gap between confidence and reality is the same gap many deepfake detection tools exploit. Vendors report 95-98% accuracy in controlled labs, but independent testing reveals a 45-50% accuracy drop against real-world deepfakes. With deepfake-related fraud losses in the US tripling to $1.1 billion in 2025 and businesses losing an average of $500,000 per incident in 2024, choosing the wrong tool is not just inconvenient. It is expensive.

Here are 10 deepfake detection tools ranked by what they actually deliver.

10-9: The security theater tier

Free browser extensions populate app stores with green checkmarks and red warnings, but most fall below 70% accuracy on current-generation deepfakes. They create false confidence without explaining their reasoning.

Microsoft Video Authenticator analyzed frames for manipulation artifacts and performed well in demos. Then Microsoft quietly stopped promoting it. Never publicly released at scale, it functions more as a research artifact than a practical defense.

8-7: Specialized but limited

Clarifai offers a developer-centric AI suite with deepfake detection capabilities. Flexible and API-friendly, but it is a general-purpose platform first, a detector second. Out-of-the-box accuracy lags behind dedicated solutions. Best for teams with ML expertise who want a customizable foundation.

Amber Authenticate uses cryptographic verification: it hashes original footage at capture so you can verify authenticity later. Genuinely powerful for proving chain of custody, but useless for evaluating suspicious content from external sources, which is the scenario most organizations face when defending against deepfake voice fraud.
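To make the hash-at-capture idea concrete, here is a minimal sketch. It is not Amber Authenticate's actual implementation: the choice of SHA-256, the in-memory byte strings, and the function names `fingerprint` and `is_unmodified` are all assumptions for illustration.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the raw footage bytes."""
    return hashlib.sha256(data).hexdigest()


def is_unmodified(data: bytes, recorded_digest: str) -> bool:
    """Check footage against the digest recorded at capture time."""
    # compare_digest does a constant-time comparison of the two hex strings.
    return hmac.compare_digest(fingerprint(data), recorded_digest)


# At capture: hash the footage and store the digest somewhere tamper-resistant.
# (Placeholder bytes stand in for a real video file.)
original = b"\x00\x01 raw video bytes (placeholder) \x02\x03"
recorded = fingerprint(original)

# Later: changing even a single byte produces a different digest.
tampered = original.replace(b"video", b"vide0")

print(is_unmodified(original, recorded))
print(is_unmodified(tampered, recorded))
```

The sketch also shows the limitation described above: verification only works when a digest was recorded at capture. A clip that arrives from an outside source has no recorded digest to compare against.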

6-5: Strong single-purpose tools

Hive AI bundles deepfake detection with content moderation, handling high-volume scanning across image, video, and audio. It catches obvious fakes fast but can miss sophisticated manipulations. A strong first filter, not a final verdict.

Pindrop Pulse focuses exclusively on audio deepfakes. Voice cloning now requires just three seconds of audio for an 85% voice match, and Pindrop analyzes voice patterns and audio artifacts to detect synthetic speech in real time. Critical for call centers, but it does nothing for video or image deepfakes.

4-3: Enterprise-grade protection

CloudSEK XVigil combines detection with digital risk protection, scanning the open web, dark web, and underground forums for deepfakes targeting your organization before they reach your inbox. This threat-intelligence approach addresses the part of the attack lifecycle that most tools ignore, which matters more as AI-powered attacks keep getting faster. It works best alongside other detection tools rather than standalone.

Intel FakeCatcher analyzes biological signals that deepfake models cannot replicate: subtle blood flow patterns in facial skin (photoplethysmography). It achieves 96% accuracy in controlled settings, dropping to 91% in the real world. The physiology-based approach detects fakes by what is missing (genuine biological signals) rather than what is added, making it harder for attackers to game.

2-1: The leaders

Reality Defender screens video, image, audio, and text in real time, with 95-98% accuracy across all modalities. Most competitors excel in one format and stumble in others; Reality Defender stays consistent. API integration means it sits inside existing security workflows. Enterprise pricing puts it out of reach for smaller organizations, but for multi-vector attacks where credential-based breach patterns combine with synthetic identity fraud, it offers the broadest protection available.

Sensity AI takes the top position. It matches Reality Defender on accuracy (95-98%) and adds the most comprehensive threat intelligence network in the space, monitoring over 500 deepfake generation platforms to identify emerging techniques before they spread. Sensity provides forensic reports explaining exactly why content was flagged (critical for legal proceedings) and offers SDKs for identity verification workflows, addressing the intersection of deepfakes and AI security vulnerabilities. The combination of detection accuracy, explainability, and proactive threat hunting puts it ahead.

What this ranking actually tells you

The gap between tiers is massive. Free tools give you false confidence. Enterprise platforms invest millions in staying ahead of adversarial techniques. But even the best lose 5-10% accuracy in production versus lab conditions.

No single tool protects you completely. The organizations with the strongest defenses layer multiple approaches: cryptographic verification at capture, AI detection at ingestion, biological signal analysis for high-stakes verification. And they train their teams to stay skeptical even when the tool says clean.
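One way to picture that layering is as a triage function that combines the three signals and refuses to accept a clean detector score on its own when the stakes are high. This is a sketch under stated assumptions: the `Evidence` fields, the `triage` function, and both thresholds are hypothetical and do not correspond to any vendor's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Evidence:
    # True/False when a capture-time hash exists; None for external content.
    hash_matches: Optional[bool]
    # Hypothetical detector output: estimated probability the media is synthetic.
    detector_fake_score: float
    # Hypothetical biological-signal check result; None if the check was not run.
    bio_signal_present: Optional[bool]
    high_stakes: bool = False


def triage(e: Evidence, flag_at: float = 0.8, review_at: float = 0.3) -> str:
    """Return 'reject', 'review', or 'accept'. Thresholds are illustrative."""
    if e.hash_matches is False:
        return "reject"  # provenance check failed outright
    if e.detector_fake_score >= flag_at:
        return "reject"  # detector is confident the media is synthetic
    if e.bio_signal_present is False:
        return "review"  # expected biological signal is missing
    if e.detector_fake_score >= review_at:
        return "review"  # detector is unsure
    if e.high_stakes and e.hash_matches is None and e.bio_signal_present is None:
        return "review"  # a clean detector score alone is not enough here
    return "accept"


# External clip, clean detector score, but tied to a high-stakes request.
print(triage(Evidence(None, 0.05, None, high_stakes=True)))
```

The last rule encodes the point about staying skeptical: when a high-stakes item has no provenance and no biological-signal check behind it, a human still looks at it even though the detector says clean.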

Your first move is not buying the most expensive tool on this list. It is testing your current detection capabilities against modern deepfakes and discovering exactly how exposed you already are.
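A rough sketch of what such a test could look like: run your detector over a labeled set of known-real and known-fake samples and measure the results yourself rather than relying on vendor figures. The `evaluate` function, the sample names, and the toy detector below are all made up for illustration; in practice `detector` would wrap whatever tool you are assessing.

```python
from typing import Callable, Dict, Iterable, Tuple


def evaluate(detector: Callable[[str], bool],
             samples: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Score a detector on (item, is_fake) pairs.

    `detector` returns True when it flags an item as fake.
    """
    tp = fp = tn = fn = 0
    for item, is_fake in samples:
        flagged = detector(item)
        if is_fake and flagged:
            tp += 1
        elif is_fake and not flagged:
            fn += 1  # a fake that slipped through
        elif not is_fake and flagged:
            fp += 1  # genuine media wrongly flagged
        else:
            tn += 1
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "missed_fake_rate": fn / (tp + fn) if (tp + fn) else 0.0,
        "false_alarm_rate": fp / (fp + tn) if (fp + tn) else 0.0,
    }


def toy_detector(name: str) -> bool:
    # Toy stand-in: flags only items that look low-resolution.
    return "lowres" in name


labeled = [
    ("fake_lowres_1", True), ("fake_hq_1", True), ("fake_hq_2", True),
    ("real_1", False), ("real_lowres_2", False), ("real_3", False),
]
result = evaluate(toy_detector, labeled)
print(result)
```

Reporting the missed-fake rate separately from overall accuracy matters because a detector can score well on a test set dominated by genuine media while still letting most high-quality fakes through.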

Sources and References

- DeepStrike / iProov — Only 0.1% of participants correctly identified all fake and real media; human detection rate for high-quality video deepfakes is just 24.5%, while deepfake-related losses tripled to $1.1 billion in the US in 2025.

- Keepnet Labs / Deloitte Center for Financial Services — AI detection tools lose 45-50% accuracy when tested against real-world deepfakes versus lab conditions; businesses lost an average of $500,000 per deepfake incident in 2024.

- Fritz AI / Sensity / Intel — Sensity AI reports 95-98% accuracy; Intel FakeCatcher achieves ~96% in controlled settings, ~91% real-world; vendor-reported metrics vary significantly between lab and production.
