Your phone pings reveal more than your location

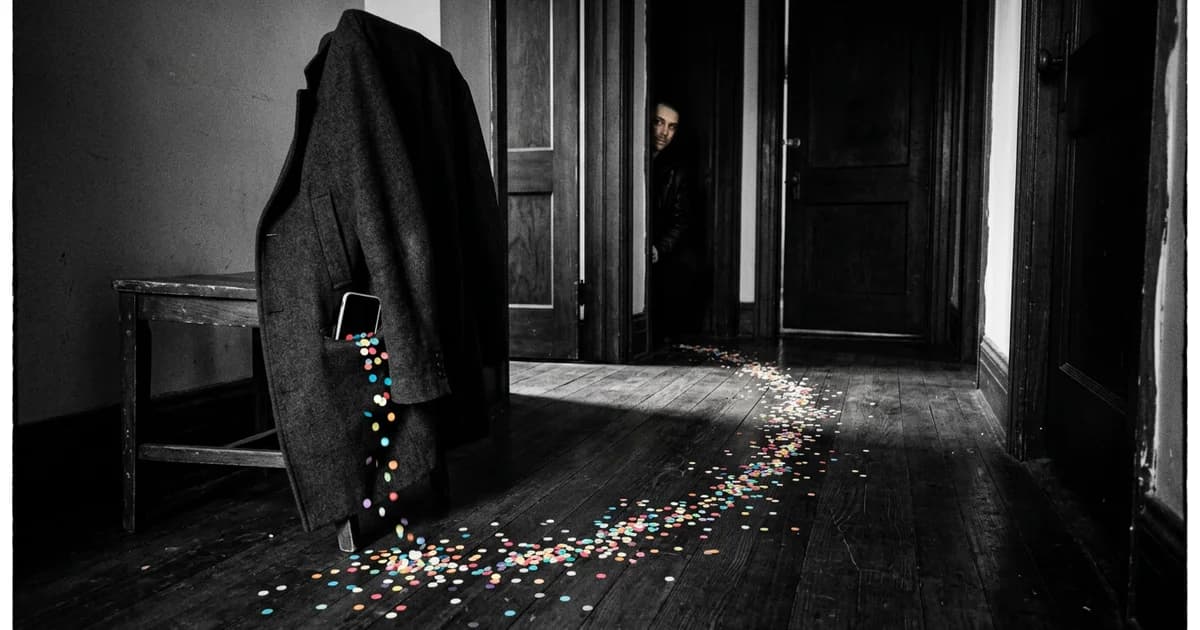

The most revealing data on your phone may not be your messages, photos, or search history. It may be the quiet trail of places your device has visited.

That is the uncomfortable lesson inside the FTC Kochava case. In May 2026, the Federal Trade Commission announced a proposed order that would bar Kochava and its subsidiary Collective Data Solutions from selling or disclosing sensitive location data without affirmative express consent (FTC, May 2026).

The hidden privacy risk is not that an advertiser knows you were near a coffee shop. It is that repeated location signals can turn ordinary movement into inference.

Location data brokers sell context, not just coordinates

A latitude-longitude point looks sterile in a spreadsheet. Add time, repetition, and nearby buildings, and it starts to speak.

The FTC's original 2022 lawsuit against Kochava alleged that precise mobile location data could trace people to reproductive health clinics, places of worship, addiction recovery facilities, domestic violence shelters, and other sensitive locations (FTC, 2022). The point was not merely that a device moved. The point was that movement can imply a medical situation, religious practice, safety concern, or private relationship.

That makes this story narrower, and sharper, than the broad data-broker debate. The macro view tells you that personal data is bought and sold. The Kochava fight shows how a phone ping can become a label.

If a device spends nights at one address, mornings at a school, and Tuesday afternoons near a clinic, a broker does not need your name to create risk. Someone may connect the pattern back to a household, employer, or community.
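To make that concrete, here is a minimal Python sketch of how little logic such an inference needs. Everything in it is hypothetical: the pings are invented, the `cell`, `likely_home`, and `recurring_afternoons` helpers are illustrative names, and rounding coordinates to three decimal places is only one crude way to group nearby points. It does not describe how Kochava or any real broker processes data.

```python
from collections import Counter, defaultdict
from datetime import datetime

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]

# Invented pings for one nameless device: (ISO timestamp, latitude, longitude)
PINGS = [
    ("2024-03-04T23:30:00", 40.71234, -74.00561),
    ("2024-03-05T02:10:00", 40.71241, -74.00558),
    ("2024-03-05T14:05:00", 40.73012, -73.99121),
    ("2024-03-06T01:45:00", 40.71229, -74.00563),
    ("2024-03-08T13:20:00", 40.75533, -73.98012),
    ("2024-03-12T14:20:00", 40.73009, -73.99118),
]

def cell(lat, lon, places=3):
    """Group nearby points by rounding coordinates (an assumed, crude grid)."""
    return (round(lat, places), round(lon, places))

def likely_home(pings):
    """Return the grid cell seen most often between 22:00 and 06:00."""
    night = Counter()
    for ts, lat, lon in pings:
        hour = datetime.fromisoformat(ts).hour
        if hour >= 22 or hour < 6:
            night[cell(lat, lon)] += 1
    return night.most_common(1)[0][0] if night else None

def recurring_afternoons(pings, min_dates=2):
    """Return (weekday, cell) pairs seen on at least min_dates different afternoons."""
    dates_seen = defaultdict(set)
    for ts, lat, lon in pings:
        t = datetime.fromisoformat(ts)
        if 12 <= t.hour < 18:
            dates_seen[(WEEKDAYS[t.weekday()], cell(lat, lon))].add(t.date())
    return sorted(key for key, dates in dates_seen.items() if len(dates) >= min_dates)

print("Likely home cell:", likely_home(PINGS))
print("Recurring afternoon visits:", recurring_afternoons(PINGS))
```

Neither function ever sees a name. If the recurring afternoon cell happens to sit on top of a clinic, the label follows from a lookup against a map, not from anything the person disclosed.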

Location data consent is the real fight

The phrase that matters in the 2026 FTC announcement is affirmative express consent. In plain English: sensitive location data should not be sold or disclosed just because a user clicked through a vague permission screen somewhere upstream.

Many people think of location permission as a single app-level choice: on or off, always or while using. The broker economy is messier. Location signals can pass through software development kits, ad tech pipes, analytics partners, and resale channels that are invisible to the person carrying the phone.

A similar hidden-layer problem appears in "The Hidden Trap Inside AI Browser Agents," where the danger is an instruction buried in a webpage. In location brokerage, the hidden layer is commercial context: who receives the signal after the app gets it.

The FTC's Kochava order is therefore not just about one company. It is a signal that regulators are treating sensitive location data as a special category, one that carries its own risk of harm.

Why anonymized does not mean harmless

The weakest privacy promise in this category is the idea that removing obvious identifiers solves the problem.

Location is unusually hard to anonymize because it behaves like a fingerprint. Most people have routines. They sleep in one place, work in another, and visit a small set of stores, clinics, schools, homes, and social spaces. Even without a name, a persistent device trail can narrow the field quickly.

That is why a location data broker can create harm without publishing a dossier titled with your identity. The sensitive inference is often enough. A visit pattern near a treatment center, shelter, or religious institution can be meaningful even if the dataset calls the person "device 8F3A."
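The fingerprint idea can be illustrated with a small Python sketch. It assumes a toy dataset of four pseudonymous devices, reduces each trail to its most common night-time and daytime grid cell, and counts how many devices share that pair. The device IDs, cell labels, and helper names (`top_cell`, `night_day_pair`, `crowd_sizes`) are all invented for illustration, and real datasets are far larger and noisier.

```python
from collections import Counter

# Invented trails keyed by pseudonymous device ID.
# Each entry is (hour of day, coarse grid cell label).
TRAILS = {
    "8F3A": [(23, "A1"), (2, "A1"), (10, "C7"), (14, "C7"), (15, "D2")],
    "2B90": [(0, "A1"), (3, "A1"), (11, "C8"), (13, "C8")],
    "77E1": [(1, "B4"), (23, "B4"), (9, "C7"), (16, "C7")],
    "C410": [(1, "B4"), (22, "B4"), (10, "C7"), (12, "C7")],
}

def is_night(hour):
    """Treat 22:00 to 06:00 as night (an assumed cutoff)."""
    return hour >= 22 or hour < 6

def top_cell(trail, want_night):
    """Most common cell among night-time or daytime pings, or None if there are none."""
    counts = Counter(c for hour, c in trail if is_night(hour) == want_night)
    return counts.most_common(1)[0][0] if counts else None

def night_day_pair(trail):
    """Reduce a whole trail to (most common night cell, most common day cell)."""
    return (top_cell(trail, True), top_cell(trail, False))

def crowd_sizes(trails):
    """For each device, count how many devices share its night/day pair."""
    pairs = {device: night_day_pair(trail) for device, trail in trails.items()}
    shared = Counter(pairs.values())
    return {device: shared[pair] for device, pair in pairs.items()}

for device, size in crowd_sizes(TRAILS).items():
    print(device, "shares its night/day pair with", size, "device(s), itself included")
```

In this toy data, device 8F3A shares its night cell with one other device and its day cell with two others, yet the combination of the two belongs to it alone. Stripping the name did nothing to change that.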

Security teams already understand a version of this problem: boring metadata can become the breach. The same principle appears in "AI security panic is missing the boring breach." With phone location, the metadata is the story.

What to check before your next app permission

You cannot personally audit every downstream buyer in the ad tech market. But you can make leakage harder.

Start with apps that request always-on location. Weather, maps, delivery, ride-hailing, and fitness apps may have obvious reasons to ask. A coupon app, casual game, or wallpaper tool deserves more suspicion. If the app's core function does not need persistent location, deny it.

Then check whether your phone lets you share approximate location instead of precise location. That small downgrade can reduce the sensitivity of a ping. It will not fix resale channels by itself, but it weakens the raw material.
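A rough Python sketch shows why a coarser fix carries less information. It rounds coordinates to fewer decimal places and estimates the size of the resulting grid cell. This is an illustration only: rounding is an assumed stand-in, not how iOS or Android actually compute approximate location, and the figure of about 111,320 meters per degree is itself an approximation. The `coarsen` and `cell_size_m` helpers and the sample coordinates are hypothetical.

```python
import math

METERS_PER_DEGREE = 111_320  # approximate length of one degree of latitude

def coarsen(lat, lon, decimals=2):
    """Round a coordinate pair to fewer decimal places (an assumed stand-in)."""
    return (round(lat, decimals), round(lon, decimals))

def cell_size_m(lat, decimals=2):
    """Approximate north-south and east-west size, in meters, of one rounded cell."""
    step = 10 ** -decimals
    north_south = step * METERS_PER_DEGREE
    east_west = step * METERS_PER_DEGREE * math.cos(math.radians(lat))
    return north_south, east_west

# Two invented points a few hundred meters apart
precise_a = (40.73012, -73.99121)
precise_b = (40.73391, -73.98904)

print(coarsen(*precise_a), coarsen(*precise_b))
ns, ew = cell_size_m(40.73, decimals=2)
print(f"Each cell spans roughly {ns:.0f} m by {ew:.0f} m")
```

At two decimal places both points land in the same cell, which at this latitude is on the order of a kilometer across. A single ping then no longer separates the clinic from the cafe down the street, although a long enough series of coarse pings can still reveal a routine.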

Finally, treat privacy labels and consent screens as starting points, not guarantees. If an app depends on advertising, analytics, or data partnerships, the sharper question is whether sensitive location can leave the app's original context.

This also connects to broader consumer trust. As we argued in "AI content is losing the authenticity test," the trust contract breaks when users feel disclosure arrived after the extraction.

The Kochava case makes that contract concrete. Your phone does not need to confess your private life. Sometimes it only needs to show where it has been.


Sources and References

- Federal Trade Commission — May 2026 FTC proposed order would bar Kochava and CDS from selling or disclosing sensitive location data without affirmative express consent.

- Federal Trade Commission — Original 2022 FTC lawsuit alleged precise location data could trace visits to reproductive health clinics, places of worship, and other sensitive locations.
