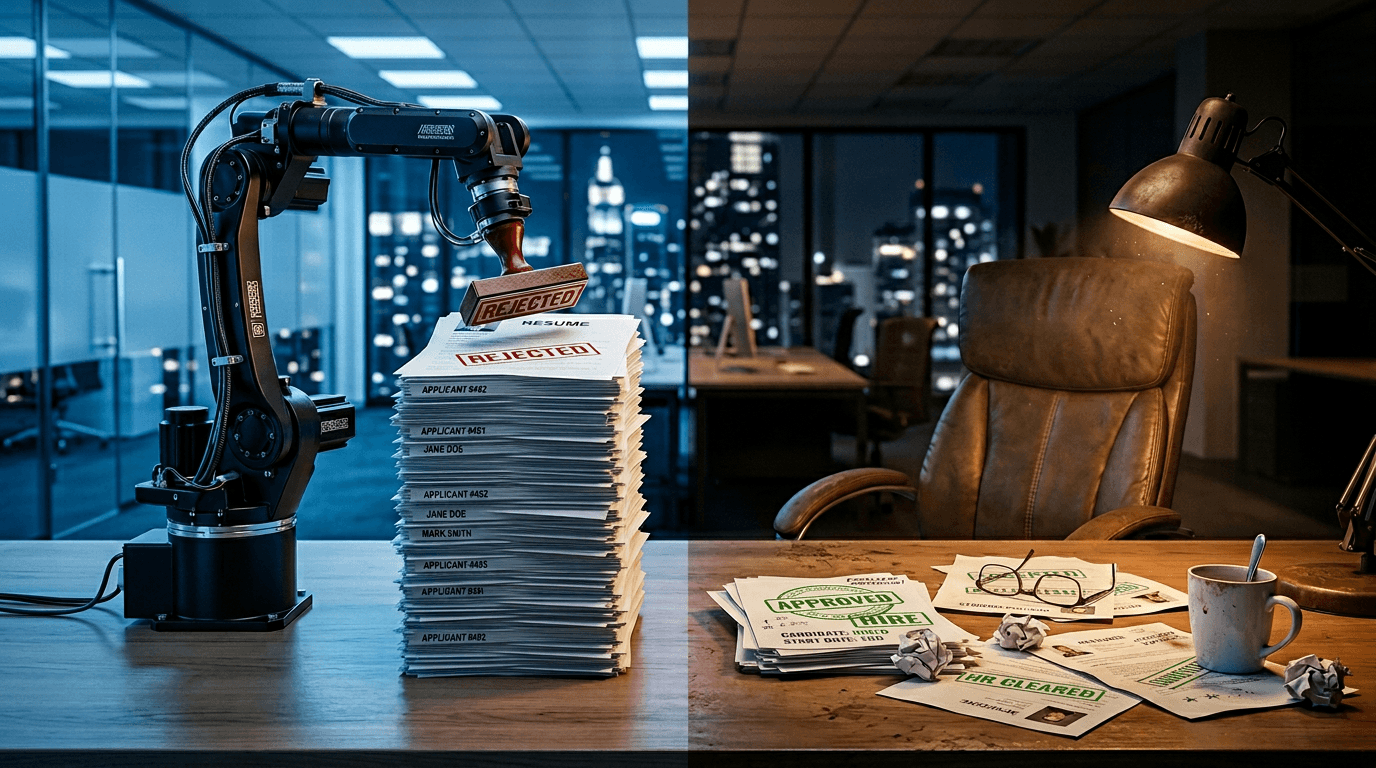

75% of resumes vanish before a human looks: AI screening vs recruiters

You sent 100 applications last month. Statistically, a human being read fewer than 25 of them.

The rest were sorted, scored, and discarded by software before anyone in HR opened a single file. A Harvard Business School study with Accenture identified 27 million "hidden workers" in the U.S. alone: people who are qualified but systematically invisible to hiring algorithms. And 88% of the employers surveyed admitted their own filters were rejecting candidates who could do the job.

This is not a glitch. It is the default.

AI resume screening is not reading your resume

Applicant tracking systems (the software that sits between your application and a recruiter's inbox) do not evaluate talent. They match keywords. Over 99% of Fortune 500 companies use an ATS, according to Select Software Reviews. The average job posting attracts more than 250 candidates. Only four to six of those people will ever sit in an interview chair.

That is a pass rate below 2.5%.
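The figure follows directly from the numbers above. A quick check, using only the 250 applicants and the four to six interviews quoted in this section (since the average posting draws more than 250 candidates, the real rate is if anything slightly lower):

```python
applicants = 250      # average candidates per posting, as cited above
interviews = (4, 6)   # low and high end of the interview range

# Share of applicants who reach an interview, as percentages
low, high = (100 * n / applicants for n in interviews)

print(f"{low:.1f}% to {high:.1f}%")  # 1.6% to 2.4%
```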

The system runs on exclusion, not selection. Recruiters define keyword filters (76.4% filter by skills, 55.3% by job titles), and anyone who does not match the exact phrasing gets buried. Not rejected in the traditional sense. Just never seen.
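To see why exact phrasing matters, here is a minimal sketch of a verbatim keyword filter. It is a deliberate simplification: commercial ATS products are proprietary and their matching logic varies, and the function name, the required phrases, and both resumes below are invented for illustration.

```python
def passes_filter(resume_text: str, required_phrases: list[str]) -> bool:
    """Return True only if every required phrase appears verbatim (case-insensitive)."""
    text = resume_text.lower()
    return all(phrase.lower() in text for phrase in required_phrases)


# Hypothetical recruiter filter and resumes, invented for illustration
required = ["project management", "sql"]

resume_a = "Five years of project management; built SQL reporting dashboards."
resume_b = "Led cross-functional initiatives; built database reporting dashboards."

print(passes_filter(resume_a, required))  # True
print(passes_filter(resume_b, required))  # False
```

Both resumes could describe the same work. Only the one that repeats the filter's wording surfaces.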

Here is the part that breaks the logic: the AI-proof skills that pay 56% more are exactly the ones that resist the rigid keyword matching ATS platforms depend on. Critical thinking, cross-functional judgment, and adaptive communication do not fit neatly into a Boolean search.

What AI screening catches (and what it completely misses)

AI resume screening excels at speed. It processes hundreds of resumes in the time a human needs for one. Accuracy on hard skills and technical qualifications runs between 89% and 94%, depending on the function.

But the gap shows up in everything that makes hiring actually work. Researchers at the Brookings Institution and the University of Washington tested 554 resumes across nearly 40,000 AI comparisons. The results were damning: AI screeners preferred white-associated names 85% of the time, favored female-associated names only 11% of the time, and never once ranked a Black male-associated name above a white male name when qualifications were identical.

This is not just inefficiency. It is structured exclusion running at scale, with a neutral interface.

The human recruiter problem is not better

Before you conclude that humans should replace the machines, consider this: human recruiters spend an average of 6 to 7 seconds per resume. At 300 applications per week, they rely on visual shortcuts (company names, university brands, job title proximity to headings) rather than actual skill evaluation.

The Harvard study found that 49% of companies automatically eliminate anyone with an employment gap longer than six months. Veterans, caregivers, people with disabilities, career changers: all penalized by a filter that treats a gap as a disqualifier rather than a data point.

Meanwhile, companies that regret replacing people with AI are discovering that removing human judgment entirely creates a different set of failures. Candidates who pass AI-led interviews go on to succeed in human interviews 53% of the time, compared with just 29% for those who came through traditional screening. AI is better at identifying baseline fit, but humans catch what algorithms cannot: unusual career arcs, transferable skills from adjacent industries, and the kind of potential that does not map to a keyword list.

The real cost is invisible

The 75% figure is debated. Some research calls it inflated. An Enhancv study found 92% of recruiters say their ATS does not auto-reject based on formatting alone. The more accurate picture is that ATS platforms sort and rank rather than outright delete, but the practical result is the same: if your resume lands at position 200 out of 250, no human will scroll that far.
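The difference between rejecting and ranking can be sketched in a few lines. Everything here is hypothetical: the scores are random stand-ins for whatever a real ATS computes, and `REVIEW_DEPTH` is an assumed number of resumes a recruiter opens, not a measured figure.

```python
import random

random.seed(0)  # fixed seed so the sketch is repeatable

# 250 hypothetical applicants with made-up match scores between 0 and 1
applicants = [{"id": i, "score": random.random()} for i in range(250)]

# The ATS sorts by score; nobody is deleted
ranked = sorted(applicants, key=lambda a: a["score"], reverse=True)

REVIEW_DEPTH = 25  # assumption: how many resumes a recruiter actually opens

seen = ranked[:REVIEW_DEPTH]
unseen = ranked[REVIEW_DEPTH:]

print(len(ranked), len(seen), len(unseen))  # 250 25 225
```

No applicant is rejected in this sketch, yet 225 of the 250 are never opened.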

The cost falls on both sides. Companies lose access to the 27 million hidden workers Harvard identified. Job seekers spend hours tailoring applications to algorithmic preferences that shift with every platform update. And the emerging legal landscape (California's 2025 AI hiring regulations, the Colorado AI Act effective 2026, the EU AI Act classifying recruitment tools as high-risk) signals that regulators have noticed the damage.

What actually works right now

The broken system will not fix itself through awareness alone. But two immediate actions shift the odds.

First, treat the ATS as a reader, not a judge. Mirror the exact language from the job description in your resume. If the posting says "project management," do not write "led cross-functional initiatives." The algorithm does not do synonyms well.

Second, bypass the system entirely when possible. Referrals account for roughly 30% to 50% of all hires at most companies, despite representing only a small fraction of total applications. One conversation with someone inside the company is worth more than 50 optimized applications submitted through a portal.

The hiring pipeline was not designed to find the best candidate. It was designed to reduce a pile of 250 to a manageable six. Understanding that distinction is the first step toward working around it, whether you are the one applying or the one building the filter.

Sources and References

- Harvard Business School and Accenture — 27 million hidden workers in the U.S. are systematically excluded by automated hiring filters. 88% of employers admit their ATS rejects qualified candidates.

- Brookings Institution / University of Washington — AI resume screeners preferred white-associated names 85% of the time across 40,000 comparisons.

- Select Software Reviews — 99% of Fortune 500 companies use ATS. Average job posting attracts 250+ candidates, only 4-6 get interviews.

- Harvard Gazette — 49% of companies auto-eliminate candidates with employment gaps over 6 months.
